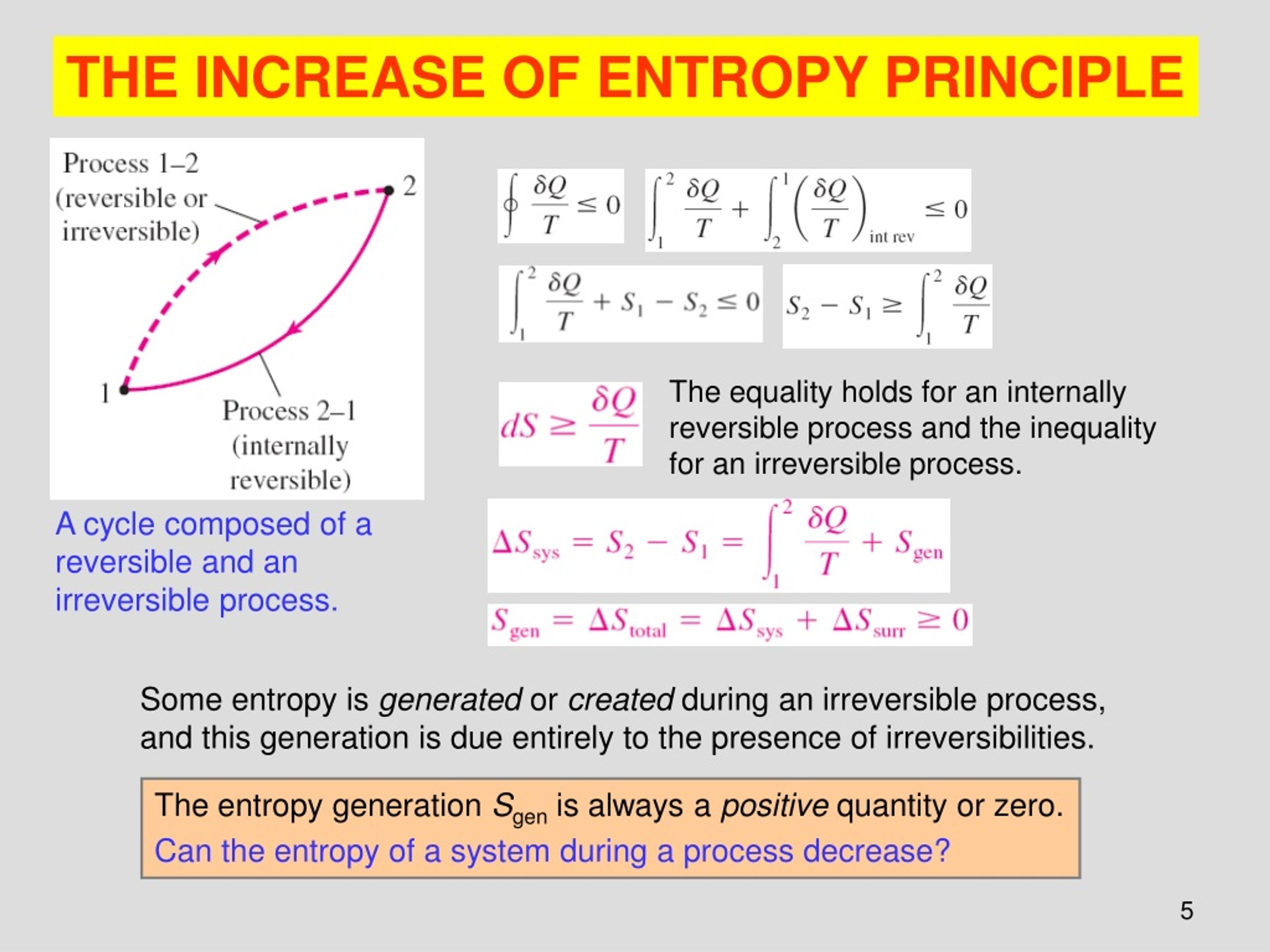

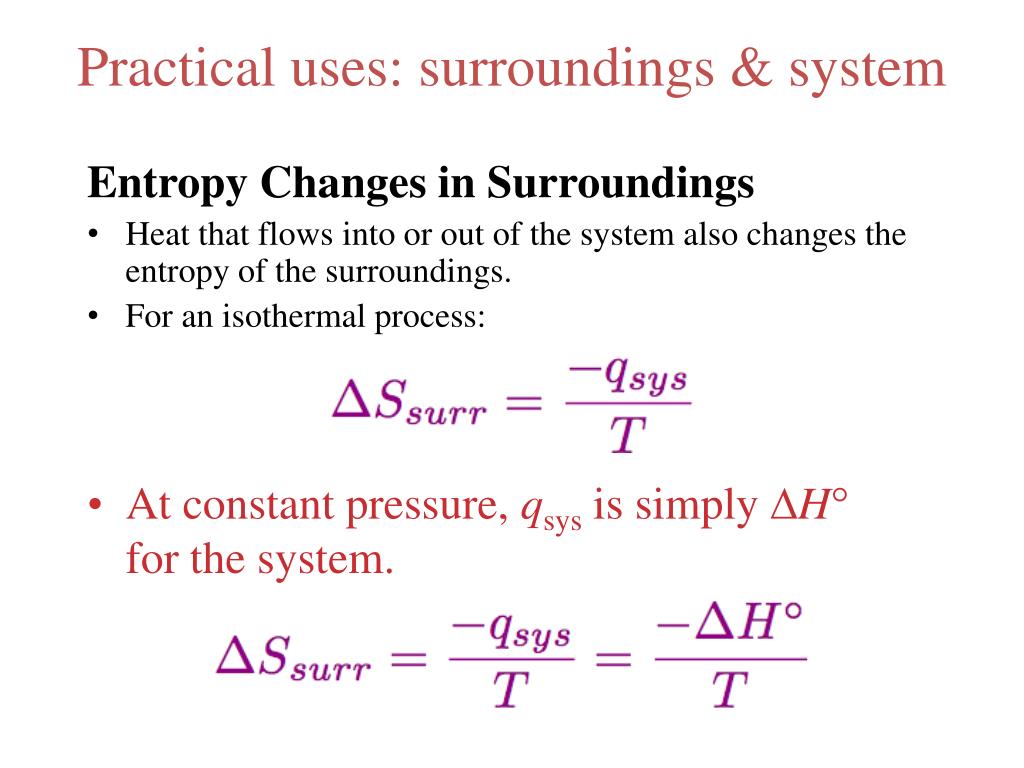

In this article, we discover the meaning of entropy and its importance in thermodynamics, both in the universe and within a system.

Entropy is a measure of how dispersed and random the energy and mass of a system are. Importantly, entropy is a state function, like temperature or pressure, as opposed to a path function, like heat or work. This means that as a system changes in entropy, the change depends only on the entropies of the initial and final states, rather than on the sequence ("path") taken between the states.

As we'll find out in a later section, entropy has a lot of use for chemists and physicists in determining the spontaneity of a process. The letter "S" serves as the symbol for entropy.

## Low Entropy

A system low in entropy involves ordered particles and directed motion. Consider a house. The mass that makes up the house is ordered and exact to form the walls and furniture. Any mechanical energy of motion, such as water and gas moving through pipes, remains tightly controlled and directed. Any heat energy remains controlled as well, with certain cold pockets, like the refrigerator, and hot pockets, like the oven, held at different temperatures that don't spread to the rest of the house.

In chemistry, a solid crystal provides another good example of an entropically low system. The lattice energy of the crystal limits the motion of its particles, resulting in a regular geometric shape.

## High Entropy

A system high in entropy, by contrast, involves widely dispersed mass and energy. Picture, for instance, a natural landscape. The mass of the trees, plants, rocks, and animals remains random and widely dispersed. Any motion and heat are similarly dispersed, resulting in a relatively consistent temperature and unpredictable movements of trees and animals.

In chemistry, a gas provides another good example of an entropically high system. The relatively low attraction between gas particles allows each molecule to move freely, resulting in a random dispersion.

## Mathematical Representations of Entropy

### Statistical Definition

The main way to quantify the orderliness of matter and energy involves counting the microstates a given system can have. Chemists define a microstate as a specific arrangement of matter and energy. Naturally, ordered, entropically lower systems have fewer possible microstates than disordered, entropically higher systems. This statistical approach involves the following natural log formula relating entropy to microstates:

$$S = k_B \ln W$$

Here, $k_B$ is the Boltzmann constant and $W$ is the number of microstates available to the system. Notice that entropy comes in units of joules per kelvin (J/K), the same units as $k_B$.

However, counting individual microstates remains impossible in most systems. Thus, this definition has the most use for calculating a system's number of microstates from known entropy values. To obtain those values, chemists instead rely on the thermodynamic definition.

### Thermodynamic Definition

Rather than deal with microstates, most chemists measure entropy values using calorimetry. Thus, chemists can define entropy thermodynamically, using the flow of heat and the temperature of the system:

$$dS = \frac{\delta q_{rev}}{T}$$

This formula for entropy tends to have the most use when measuring the change between two states:

$$\Delta S = \int_{i}^{f} \frac{\delta q_{rev}}{T}$$

At constant temperature, this reduces to $\Delta S = q_{rev}/T$.

Importantly, the heat used to calculate entropy is that given off or absorbed if the given change were carried out reversibly. Heat is a path function, but the integral of $\delta q_{rev}/T$ takes the same value along every reversible path between two given states, which is why entropy is a state function. For the same reason, we still use the reversible heat even when calculating the entropy change of an irreversible process between two states.
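As a rough illustration of the two definitions discussed in this article, the sketch below assumes the standard forms S = k_B ln W for the statistical definition and ΔS = q_rev/T for a reversible change at constant temperature. The function names and the ice-melting numbers (about 6.01 kJ/mol absorbed at 273.15 K) are illustrative assumptions, not taken from the article.

```python
import math

# Boltzmann constant in J/K (exact under the 2019 SI definition).
K_B = 1.380649e-23


def statistical_entropy(microstates: float) -> float:
    """Entropy in J/K from a microstate count W, using S = k_B * ln(W)."""
    if microstates < 1:
        raise ValueError("a system has at least one microstate")
    return K_B * math.log(microstates)


def isothermal_entropy_change(q_rev: float, temperature: float) -> float:
    """Entropy change in J/K for reversible heat q_rev (J) at constant T (K)."""
    if temperature <= 0:
        raise ValueError("temperature must be positive (in kelvin)")
    return q_rev / temperature


# A perfectly ordered system with a single microstate has zero entropy.
print(statistical_entropy(1))

# Illustrative case: one mole of ice melting reversibly at its melting point.
delta_s = isothermal_entropy_change(q_rev=6010.0, temperature=273.15)
print(f"{delta_s:.1f} J/(mol*K)")
```

The second call shows why calorimetry is the practical route: a measured heat and a temperature give the entropy change directly, with no need to count microstates.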
